Many researchers want their research to influence public policy. Many charitable donors also want to influence or improve public policy, and often fund the production of research and other activities to that end. Sometimes it works; other times it doesn’t. What raises the chances of success? And how can a donor or researcher predict which opportunities or approaches are likely to be fruitful? Giving Evidence was hired by a large foundation to find out. We worked with On Think Tanks, and are sharing here some of what we found and learnt. We hope that it is helpful to you!

The foundation funds the production of research and ‘evidence into policy’ (EIP) activities. It focuses on low-income countries. Most of the researchers whom it funds are based in high-income countries. Often those researchers form partnerships with the public sector entities that they seek to influence: those can be national government departments (e.g., department of education), central government functions (e.g., office of the president), other national public bodies (e.g., central bank), or regional or local governments or municipalities. Those partnerships take many forms, varying in their resource intensity, cost and duration.

Our research comprised:

- Review of the literature about evidence-into-policy. This was not a systematic review, but rather we looked for documents, and insights within those documents, that are particularly relevant to the types of partnerships described. Our summary of the literature is here.

- Interviews with both sides: with various people in research-producing organisations (universities, non-profit research houses, think tanks and others), and some of their counterparts in governments and operational organisations. A summary of insights from our interviews is here.

We also did a lot of categorising and thinking.

First, all evidence-into-policy efforts must have these three steps:

A. Decide the research question

B. Answer the question, i.e., produce research that answers it

C. Support / enable implementation: e.g., help policymakers and decision-makers to find, understand and apply the implications of the research; disseminate the findings; support with implementation.

We have found this categorisation useful in various ways, including:

- Checking that there is activity at all three stages. For example, if somebody does A and B but not C, the research is unlikely to have much effect. Equally, there are sometimes initiatives to identify unanswered or important research questions (A) but no capacity to then answer them (i.e., no proceeding to B or C).

- Research seems to be much more likely to influence policy or practice if policymakers or practitioners (‘the demand side’) are involved at A, i.e., in specifying the problem.

- But in much academic research, few or no policymakers or practitioners are involved at A: the research question is decided purely on the basis of what is ‘academically relevant’ or what interests the academics. In that model, the researchers’ first main contact with people who might use the research is at C, and the research might address a question which is of no interest to anybody else. We have sometimes called this approach “here’s that report that you didn’t ask for”. It is hardly surprising if this model does not create much influence.

- Clearly, an organisation’s choice of what it does at each stage of ABC is a way of articulating its theory of change.

Some key findings

A first comment is that we see many organisations which run interventions at a small scale (and funders who fund them). We often advise funders to support more systemic work, which, if successful, will influence much larger, existing budgets and programs. We liken this to how a little tug can direct a massive tanker. Much good philanthropy is about tugs and tankers. This project was a welcome and important opportunity to think about the relative effectiveness of various types of tug. We found that:

- Organisations have diverse approaches / models for evidence-into-policy (i.e., theories of change) and therefore many different forms of partnerships. The organisations in the set vary considerably in their ABCs: for instance, at A, who is consulted and involved in determining the research questions, and whose priorities are involved? At B, who is involved in producing the research, e.g., which countries are they from? At C, what dissemination channels are used, and what engagement is there with potential users?

- The most substantial partnerships that we found are between research-producers. Many of those (e.g., research networks) involve more frequent contacts than do partnerships between research-producers and policy-makers.

- Evidence of outcomes (the benefits) is scarce and patchy. We understand that the effects of working on change at scale and/or doing system-related work often cannot be formally measured, in the sense of doing rigorous, well-controlled studies which indicate causation. Yet organisations could more routinely collate their ‘outcomes’, i.e., changes in the world which can reasonably be argued to be related to their work.

- It is hard to be precise about the costs (inputs) of the various organisations’ EIP work.

- Unrestricted funding makes a big and positive difference. This is mainly because opportunities to influence policy often come up with quite short windows, so flexibility is key.

- We found considerable interest in becoming better at EIP – across funders, researchers, synthesisers and distributors. This may be a sign of growing sophistication in the field: whereas 10 years ago the main focus was on producing research (rightly, as there was then so little), now it is more on influence, change and improving lives.

Much more detail is in the reports. We hope that they are useful to funders, research-producers, research-translators, and research-users!

Giving Evidence director Caroline Fiennes talked about these topics at the Global Evidence & Implementation Summit in Australia in 2018: video below. Excuse the didgeridoo interruption!

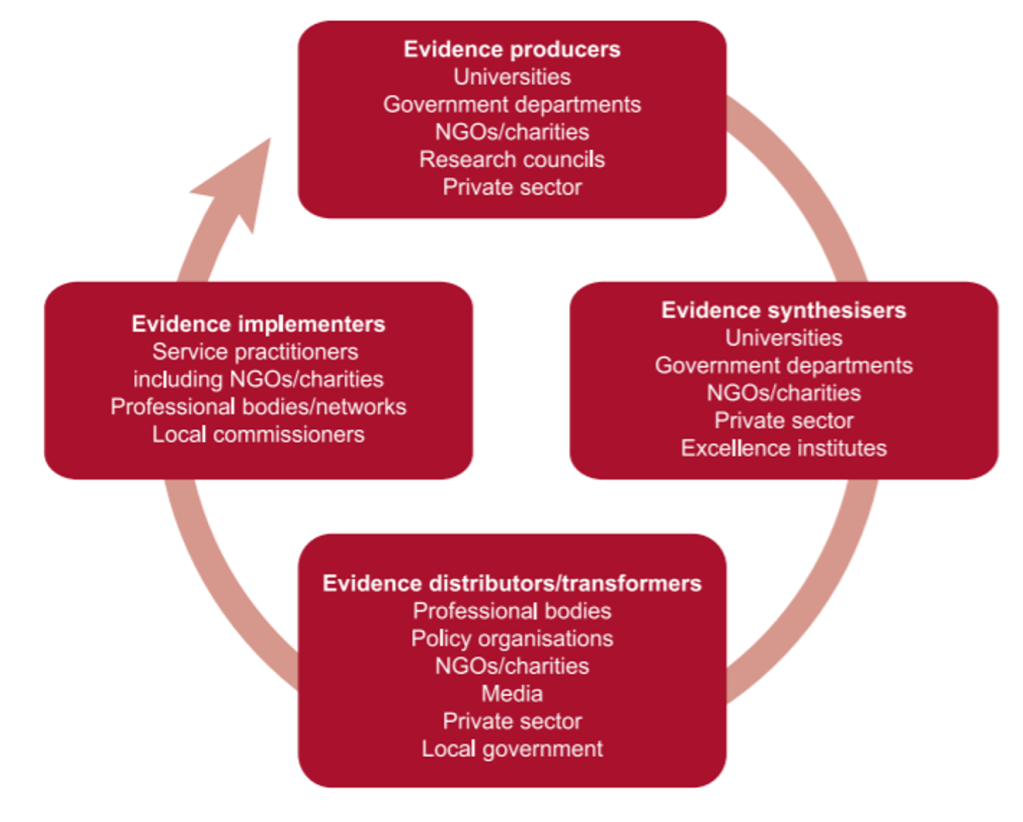

The Evidence System (framed by Prof Jonathan Shepherd):