First published in the Financial Times in August 2016.

Did the charity make it happen, or would it have happened anyway?

The purpose of a charity’s work — and your support for it — is to create a benefit beyond what would have happened anyway. This, of course, sounds obvious but (as my home discipline of physics powerfully attests) it can be useful to state the obvious because it can throw up some unexpected insights.

Charities are under considerable pressure to “demonstrate their impact”, yet few examine or recount what would have happened without them. “What difference does your work make beyond what would have happened anyway?” is perhaps the single most useful question that donors or trustees can ask.

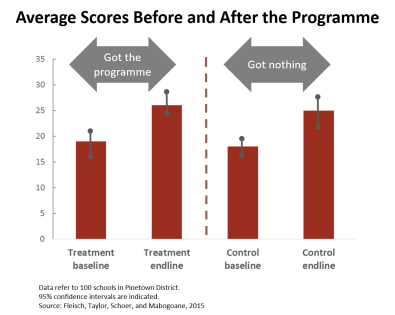

(Above: real data from a reading programme in KwaZulu-Natal, South Africa. Evidently the programme achieved nothing.)

A few years ago, Goldman Sachs ran full-page adverts about its “10,000 Women” programme for female entrepreneurs proclaiming that “70 per cent of graduates surveyed have increased their revenues, and 50 per cent have added new jobs”.

Now, in terms of what would have happened anyway, this advert provides zero insight. Perhaps the programme helped those women, maybe it made no difference, or perhaps the women would have done better without it occupying their time.

Charities (and governments too) often purport to show their impact by comparing the situation before with the one after. Be sceptical of these claims. Simply showing what changed during the work says nothing about what changed because of the work.

Suppose that there is a poverty alleviation programme, and that some families can show their household income before and after it. Well, that shows nothing about what has changed because of the programme. Many factors change in people’s lives, including during such a programme, any or all of which could have contributed to the change in household income observed.

Dean Karlan, a development economist at Yale University, is fond of saying that people normally get older during development programmes but that doesn’t show that the programmes cause ageing.

Comparisons of before and after are particularly misleading when they’re about work in education or youth development, because young people develop over time anyway. A 2014 study in KwaZulu-Natal, a province in South Africa, found that children on a reading catch-up programme saw their learning level improve by eight percentage points. Children who did not do the programme improved by almost precisely the same amount. (See graph above.) That shows that the improvement that happened during the programme was not because of the programme.
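The arithmetic behind that conclusion can be made explicit. The sketch below is illustrative only: the eight-point gain is the figure quoted above for children on the programme, while the comparison group's gain is a placeholder standing in for "almost precisely the same amount", and the function name is hypothetical.

```python
def effect_beyond_counterfactual(change_with_programme, change_without_programme):
    """Change attributable to the programme: the observed change minus
    the change that happened anyway in a comparable group."""
    return change_with_programme - change_without_programme


# Eight percentage points is the gain quoted for children on the programme.
programme_gain = 8.0
# Assumption: the article says only "almost precisely the same amount",
# so 8.0 is a placeholder, not a figure taken from the study.
comparison_gain = 8.0

# What a simple before-and-after comparison would report as "impact".
before_after_claim = programme_gain
# What remains once the change that happened anyway is subtracted.
estimated_effect = effect_beyond_counterfactual(programme_gain, comparison_gain)

print(f"Before-and-after comparison suggests: {before_after_claim:+.1f} points")
print(f"Effect beyond what would have happened anyway: {estimated_effect:+.1f} points")
```

The before-and-after figure and the estimated effect differ by exactly the amount that the comparison group improved, which is the quantity a before-and-after comparison never sees.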

It can be hard to distinguish the effect of a programme from the effect of other factors. Sometimes a charity (or government) will use complicated measures to determine the before and after data, and the information can be rich. But don’t mistake detail for what researchers call an “identification strategy”, or a way of identifying what caused what.

This month alone, I’ve been approached by three sets of people with clever-sounding tools for “measuring impact”. One is a complicated, physics-like regression-analysis-type formula ostensibly for disentangling the relative contributions of various factors; and two are apparently ways of establishing the financial effect of programmes. None of them looks at what would have happened anyway, so they don’t “identify” the effect of the programme. They won’t really tell us anything.

Furthermore, it’s imperative to look at what would have happened anyway to the specific people we’re talking about. For example, the Australian charity Fitted For Work (FFW) reports that “75 per cent of women who received wardrobe support and interview coaching from FFW find employment within three months … In comparison … about 48 per cent of women who rely on Australian federal job agencies find work within a three-month period.”

But the comparison probably isn’t valid. Women who find out about FFW and choose to ask for help are probably better networked and motivated than the average unemployed person helped by the federal agencies.

Those differences would create a “selection bias” in the women the charity serves, meaning that they would do better than the average person anyway. Identifying FFW’s impact requires seeing what would have happened anyway to those particular women.

We could see this if the charity supported some of the women who ask, but not others, and then compared how the two groups fared. If the groups are chosen at random, they will probably be equivalent, so any difference in performance will arise from FFW’s work. (Most charities are oversubscribed so must ration their support anyway. If they ration it randomly, not only is that fair and transparent, but it also lets them scientifically identify their impact.)
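A small simulation can show why random rationing matters. Everything in the sketch below is invented for illustration: the applicants, their chances of finding work, the assumed ten-point benefit of support, and the function names are all hypothetical, and none of it is data from FFW or any other charity. It compares two ways of deciding who gets support: letting the women with the best prospects select themselves in, and tossing a coin.

```python
import random

# Assumption: support raises the chance of finding work by 10 percentage points.
TRUE_EFFECT = 0.10


def share_employed(group):
    """Fraction of a group that found work."""
    return sum(group) / len(group)


def employment_gap(assign, n_applicants=20_000, seed=1):
    """Job-finding rate of supported women minus that of unsupported women."""
    rng = random.Random(seed)
    outcomes = {True: [], False: []}
    for _ in range(n_applicants):
        # Hypothetical chance of finding work with no help at all.
        baseline = rng.uniform(0.3, 0.7)
        supported = assign(baseline, rng)
        chance = baseline + (TRUE_EFFECT if supported else 0.0)
        outcomes[supported].append(rng.random() < chance)
    return share_employed(outcomes[True]) - share_employed(outcomes[False])


def self_selected(baseline, rng):
    """Women with better prospects anyway are the ones who end up supported."""
    return baseline > 0.5


def randomised(baseline, rng):
    """A coin toss decides, regardless of the woman's prospects."""
    return rng.random() < 0.5


gap_self_selected = employment_gap(self_selected)
gap_randomised = employment_gap(randomised)

print(f"Assumed true effect:        {TRUE_EFFECT:.2f}")
print(f"Gap with self-selection:    {gap_self_selected:.2f}")
print(f"Gap with random assignment: {gap_randomised:.2f}")
```

Under these assumptions, the self-selected comparison overstates the benefit because the supported women would have done better anyway, while the coin-toss comparison lands close to the effect that was built into the simulation.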

This, of course, is a randomised controlled trial, and they’re important. Micro-loans to poor villages in north-east Thailand appeared to be having a positive effect when analysed simplistically using before and after comparators. But these analyses didn’t deal with selection bias in the people who took the loans. A careful, randomised study did correct for selection bias and looked at how the specific people involved would have fared anyway, without the micro-loans. It found that the loans had no effect at all on the amounts that households saved, the time they spent working, or the amount they spent on education.

Without decent identification strategies, we risk wasting precious resources on programmes that don’t work. Selection effects are sometimes so strong that they mask the fact that the programme can do harm. The importance of using reliable comparisons is clear from the unusually ardent title of a medical journal editorial last year: “Blood on Our Hands: See The Evil in Inappropriate Comparators.”

There are of course instances when we can’t see or simulate what would have happened anyway — if Rosa Parks had changed her bus seat, or if Victorian philanthropist William Rathbone had not invented the UK’s system of district nurses. But in most cases we can — and if the claims of impact that you see don’t consider what would have happened otherwise, I suggest that you ask.